Introduction

Objective

The objective of the HRM is to allow subtitle and caption authors and providers to verify that the content they provide does not exceed defined complexity levels, so that playback systems can render the content synchronized with the author-specified display times.

Playback systems include desktop computers, mobile devices and home theatre devices.

The HRM is not a new concept: a version of it has been included in all versions and editions of [[IMSC]]. This specification extracts the HRM into a standalone document to simplify maintenance. The First Public Working Draft of this specification essentially included the HRM as it was specified in [[ttml-imsc1.2]]. Substantive changes made since then are summarized in .

Why limit the complexity of IMSC Document Instances?

IMSC Document Instances are typically authored by a first party and rendered by a second party. Unless both parties agree on the maximum complexity of an IMSC Document Instance, it is likely that:

- an IMSC Document Instance authored by a first party will exceed the capabilities of the presentation processor of a second party, resulting in an incomplete presentation where some subtitles or captions might be missing or might be presented too late;

- a first party authors only extremely simple IMSC Document Instances in an attempt to ensure a complete presentation across all renderers, resulting in a lower quality presentation;

- a second party over-provisions their presentation processor in order to ensure a complete presentation of all IMSC Document Instances, resulting in increased run-time resource usage, code complexity, etc.; or

- one presentation processor becomes the de facto reference to determine whether the presentation of an IMSC Document Instance will succeed, at the expense of other renderers and consistency of presentation.

As illustrated in , by defining a method (the HRM) to compute a proxy for the complexity of an IMSC Document Instance and specifying a complexity limit based on such proxy:

- authors can ensure that their IMSC Document Instances do not exceed this limit;

- implementers can design their presentation processors to maximize the likelihood that they will be able to render correctly all IMSC Document Instances that do not exceed this limit; and

- audiences can expect subtitle and caption presentation to match the authorial intention.

Why is the HRM needed to limit complexity?

The HRM supplements the syntactic and structural constraints imposed in [[IMSC]] by imposing constraints on the contents of the presentation.

Because of the temporal and spatial variability of subtitles and captions across types of content, territories and languages, it is not possible to limit the complexity of an IMSC Document Instance using only average values.

An average-based constraint of 840 characters per minute could be met in multiple ways, with different rendering complexities. Contrast two potential approaches:

In the first, 5 characters are presented for a fraction of a second, followed by 835 characters that are then presented for over 59 seconds. This generates a high rendering complexity for the 835 characters, since there is only a brief time available to paint them.

In the second, 210 characters are painted every 15 seconds, giving 15 seconds to prepare for the next presentation. This has a much lower rendering complexity.
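The contrast between the two approaches can be checked with a few lines of arithmetic. The following Python sketch is illustrative only: the function names are hypothetical, and the 0.5 second gap is an assumed value for "a fraction of a second". It shows that both approaches have the same one-minute average, while the paint rate needed in the gap before each presentation differs by two orders of magnitude.

```python
def average_chars_per_minute(events, window_seconds=60.0):
    """Average character rate over the window.

    events is a list of (presentation_time_seconds, character_count).
    """
    total = sum(count for _, count in events)
    return total * 60.0 / window_seconds


def peak_required_rate(events):
    """Largest characters-per-second rate needed to paint an event within
    the gap since the previous event. The first event has no predecessor
    and is skipped.
    """
    rates = [
        count / (time - prev_time)
        for (prev_time, _), (time, count) in zip(events, events[1:])
    ]
    return max(rates)


# First approach: 5 characters, then 835 characters shortly afterwards.
# The 0.5 s gap is an assumption standing in for "a fraction of a second".
bursty = [(0.0, 5), (0.5, 835)]

# Second approach: 210 characters every 15 seconds.
steady = [(0.0, 210), (15.0, 210), (30.0, 210), (45.0, 210)]

bursty_average = average_chars_per_minute(bursty)  # 840 characters per minute
steady_average = average_chars_per_minute(steady)  # 840 characters per minute
bursty_peak = peak_required_rate(bursty)           # 835 / 0.5 = 1670 chars/s
steady_peak = peak_required_rate(steady)           # 210 / 15 = 14 chars/s
```

An average-based limit sees the two schedules as identical; only a measure that looks at the time available before each presentation distinguishes them.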

The HRM achieves a more accurate representation of the complexity of an IMSC Document Instance at any given time by taking into account its past complexity in addition to its instantaneous complexity. The same approach is commonly used in video to limit bitstream complexity, e.g., the Hypothetical Reference Decoder (HRD) specified in [[iso14496-10]].

How does the HRM measure and limit complexity?

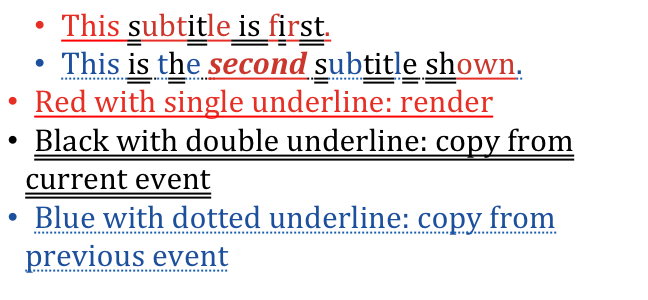

The HRM defines a simple model for the rendering of subtitles and captions, and uses the time it takes to render subtitles and captions according to that model as a proxy for the complexity of the subtitles and captions. Rendering includes drawing region backgrounds, rendering text and copying text. Complexity is then limited by requiring that the time to render one subtitle or caption is shorter than the time elapsed since the previous subtitle or caption.
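The limiting rule described above can be sketched as a simple check. This Python example is a minimal illustration under stated assumptions, not the normative HRM algorithm: the function name, the event representation and the render times are all hypothetical, and the modeled render time of each subtitle is taken as a given input rather than computed.

```python
def find_violations(events):
    """Return the begin times at which the complexity rule is broken.

    events is a list of (begin_seconds, render_seconds), sorted by begin
    time. An event breaks the rule when its modeled render time is not
    shorter than the time elapsed since the previous event. The first
    event has no predecessor and is not checked in this sketch.
    """
    violations = []
    for (prev_begin, _), (begin, render_seconds) in zip(events, events[1:]):
        elapsed = begin - prev_begin
        if render_seconds >= elapsed:
            violations.append(begin)
    return violations


# Hypothetical subtitles: (begin time, modeled render time), in seconds.
events = [(0.0, 0.2), (2.0, 0.5), (2.3, 0.4), (6.0, 1.0)]

# The subtitle at 2.3 s needs 0.4 s of rendering but only about 0.3 s has
# elapsed since the previous subtitle, so it is the only one reported.
violations = find_violations(events)
```

Reporting the begin times, rather than a single pass or fail result, lets a tool point at the specific moments where the content is too complex.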

This simple model requires only a static analysis of the IMSC Document Instance: it requires no fetching of external resources and does not require the IMSC Document Instance to be actually rendered. Several simplifying assumptions are made to achieve this. For example, the model assumes that each character is drawn independently, and compensates for the many cases where this assumption is false by assigning different render speeds to different scripts. In general, the model is not intended to capture the actual time that an implementation takes to render subtitles and captions, but rather to scale with it: a document that is twice as complex according to the model would require roughly twice as many resources to actually render.
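The idea of per-script render speeds can be sketched as follows. This Python example is a toy illustration: the two rates are hypothetical values chosen for easy arithmetic and are not the values specified by the HRM, and the script test covers only the CJK Unified Ideographs block as a deliberately narrow stand-in for script detection.

```python
# Hypothetical render speeds in characters per second (not HRM values).
CJK_RATE = 10.0
OTHER_RATE = 20.0


def is_cjk_ideograph(char):
    """True for code points in the CJK Unified Ideographs block only."""
    return 0x4E00 <= ord(char) <= 0x9FFF


def modeled_render_seconds(text):
    """Modeled time to draw text, treating each character independently."""
    cjk_count = sum(1 for char in text if is_cjk_ideograph(char))
    other_count = len(text) - cjk_count
    return cjk_count / CJK_RATE + other_count / OTHER_RATE


latin = modeled_render_seconds("Hello")        # 5 characters at 20 per second
cjk = modeled_render_seconds("字幕字幕字")      # 5 characters at 10 per second
doubled = modeled_render_seconds("Hello" * 2)  # twice the text of the first call
```

Because every character contributes independently, the modeled time is linear in the amount of text: doubling the text doubles the result, which is the scaling behavior described above.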

Where is the HRM used?

The HRM is typically used prior to distribution of the IMSC Document Instance to the end-user, as an integral part of authoring and as a quality check before distribution.

When the HRM is used, the consequences of an IMSC Document Instance exceeding the HRM limits depend on the context:

- an authoring system might, for example, flag the specific point in time where the HRM limits were exceeded;

- the ingest component of a streaming platform might outright reject the IMSC Document Instance.

The HRM is not intended to be used when the IMSC Document Instance is presented to end-users since:

- end-users are not concerned with a technical complexity measure, just as they are not concerned with video bit-rate, but instead with whether the presentation is successful;

- a presentation processor cannot generally reduce the complexity of an IMSC Document Instance without impacting the presentation.